The Core Philosophy of OpenWhiz

OpenWhiz was built to solve a critical problem in the modern data stack: the cost of moving data and the overhead of processing it. While most libraries focus on high-level abstractions, OpenWhiz focuses on the underlying architecture, aiming to extract as much useful work as possible from every CPU cycle.

Revolutionary Technical Features

According to our official documentation and benchmarks, OpenWhiz introduces several industry-first innovations that set it apart:

1. WhizCore: The Hardware-Aware Processing Engine

At the heart of OpenWhiz lies WhizCore, an in-house engine that implements Hardware-Aware Scheduling. Unlike generic libraries, WhizCore detects the host machine's cache hierarchy and supported instruction sets (such as AVX-512 or Arm NEON) at runtime. This lets it select optimized code paths and work sizes for each machine, sustaining high throughput across different environments.
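The runtime detection described above can be illustrated with a small Python sketch. It assumes a Linux host (it reads `/proc/cpuinfo` and falls back to an empty feature set elsewhere), and every name here (`detect_cpu_features`, `select_kernel`, `tile_elements`, the `sum_*` labels) is hypothetical, not part of WhizCore's API. It shows the general pattern only: probe the CPU once, pick the most specialized kernel variant the hardware supports, and size work tiles so they fit a given cache level.

```python
def detect_cpu_features():
    """Best-effort read of CPU feature flags; returns an empty set if unavailable."""
    try:
        with open("/proc/cpuinfo", encoding="utf-8", errors="replace") as f:
            for line in f:
                key, _, value = line.partition(":")
                # x86 kernels list ISA extensions under "flags", Arm kernels under "Features".
                if key.strip() in ("flags", "Features"):
                    return set(value.split())
    except OSError:
        pass  # Not Linux, or /proc is unavailable in this environment.
    return set()


# Hypothetical kernel variants, ordered from most to least specialized.
# A real engine would map each label to compiled SIMD code.
KERNEL_VARIANTS = [
    ("avx512f", "sum_avx512"),
    ("avx2", "sum_avx2"),
    ("asimd", "sum_neon"),  # AArch64 reports NEON support as "asimd"
    ("neon", "sum_neon"),   # 32-bit Arm reports it as "neon"
]


def select_kernel(features):
    """Return the first (most specialized) variant whose required flag is present."""
    for flag, name in KERNEL_VARIANTS:
        if flag in features:
            return name
    return "sum_generic"  # portable fallback when no known extension is found


def tile_elements(cache_bytes, itemsize, n_operands):
    """Largest tile length (in elements) that keeps one tile of every operand in cache."""
    return max(1, cache_bytes // (itemsize * n_operands))


if __name__ == "__main__":
    features = detect_cpu_features()
    # 32 KiB is a common L1 data-cache size; it is an assumption here, not a measurement.
    print(select_kernel(features), tile_elements(32 * 1024, 8, 2))
```

Ordering the variants from most to least specialized keeps the selection rule trivial: the first match wins, and the generic path guarantees the code still runs on unknown hardware.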

2. Zero-Latency Memory Mapping

OpenWhiz uses an advanced memory-mapped file I/O strategy. By mapping large datasets directly into the process's virtual address space, it avoids copying data through intermediate buffers. Because the operating system loads pages only as they are accessed, data loading is "near-instant" and the memory footprint is significantly reduced, allowing developers to process datasets much larger than the available physical RAM.
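A minimal sketch of the zero-copy idea, using only Python's standard `mmap` module. It is not OpenWhiz's loader: the file layout (raw native-endian float64 values) and the helper names `write_float64_file` and `mapped_sum` are assumptions for illustration. The file is mapped read-only and reinterpreted as doubles through a `memoryview`, so no intermediate buffer is filled with `read()`; the operating system pages data in only as the summation touches it.

```python
import math
import mmap
import os
from array import array


def write_float64_file(path, values):
    """Store values as raw native-endian float64 so they can be mapped later."""
    with open(path, "wb") as f:
        array("d", values).tofile(f)


def mapped_sum(path):
    """Sum a float64 file through a read-only memory map instead of read()."""
    if os.path.getsize(path) == 0:
        return 0.0  # mmap refuses to map an empty file
    with open(path, "rb") as f, mmap.mmap(f.fileno(), 0, access=mmap.ACCESS_READ) as mm:
        # Reinterpret the mapped bytes as doubles without copying them.
        # Both views are released before the map closes, as mmap requires.
        with memoryview(mm) as raw, raw.cast("d") as values:
            return math.fsum(values)
```

Because the views are released in reverse order on exit, the map can be closed cleanly; holding a live `memoryview` over a closed `mmap` would raise a `BufferError`.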

3. Advanced Vectorization & Parallelism

OpenWhiz approaches parallel computing through its Granular Task Decomposition system. Instead of relying on traditional thread pooling, it breaks data operations into micro-tasks that are executed across all available cores with minimal synchronization overhead. Because idle cores keep picking up remaining micro-tasks, a few slow partitions no longer hold up the entire job, which mitigates the "long-tail" latency issues common in parallel data processing.
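The micro-task idea can be sketched in Python with `concurrent.futures`. This illustrates dynamic scheduling under stated assumptions and is not OpenWhiz's scheduler: `micro_tasks` and `parallel_sum_of_squares` are hypothetical names, the `grain` parameter stands in for whatever task size OpenWhiz actually uses, and a thread pool is still the execution substrate here, so the point shown is task granularity. Python threads also share the GIL, so the example demonstrates load balancing rather than real CPU speedup. Work is cut into many small intervals on the executor's shared queue; each idle worker takes the next one, so a slow interval delays only itself.

```python
import os
from concurrent.futures import ThreadPoolExecutor


def micro_tasks(n, grain):
    """Split range(n) into half-open (start, stop) intervals of at most `grain` items."""
    if grain <= 0:
        raise ValueError("grain must be positive")
    return [(start, min(start + grain, n)) for start in range(0, n, grain)]


def parallel_sum_of_squares(data, grain=1024, workers=None):
    """Sum x*x over `data` by running many small tasks on a shared worker pool."""
    tasks = micro_tasks(len(data), grain)

    def run(bounds):
        start, stop = bounds
        return sum(x * x for x in data[start:stop])

    # Idle workers pull the next pending micro-task from the executor's queue,
    # so work flows to whichever threads are free instead of fixed partitions.
    with ThreadPoolExecutor(max_workers=workers or os.cpu_count() or 1) as pool:
        partials = list(pool.map(run, tasks))
    return sum(partials)  # cheap final reduction over per-task results


if __name__ == "__main__":
    print(parallel_sum_of_squares(list(range(10_000)), grain=256))
```

A smaller `grain` balances load more evenly at the cost of more scheduling overhead per item; the right value is a trade-off that a real engine would tune.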

Comparative Advantage: Technical Benchmarks

A New Standard for Data Engineering

OpenWhiz is not just a collection of algorithms; it is a comprehensive engineering solution. It provides a robust set of tools for:

- High-Dimensional Feature Analysis: Utilizing optimized linear algebra kernels.
- Real-time Streaming Analytics: Maintaining sub-millisecond consistency even under heavy load.
- Predictive Modeling: Built-in support for low-latency inference engines.

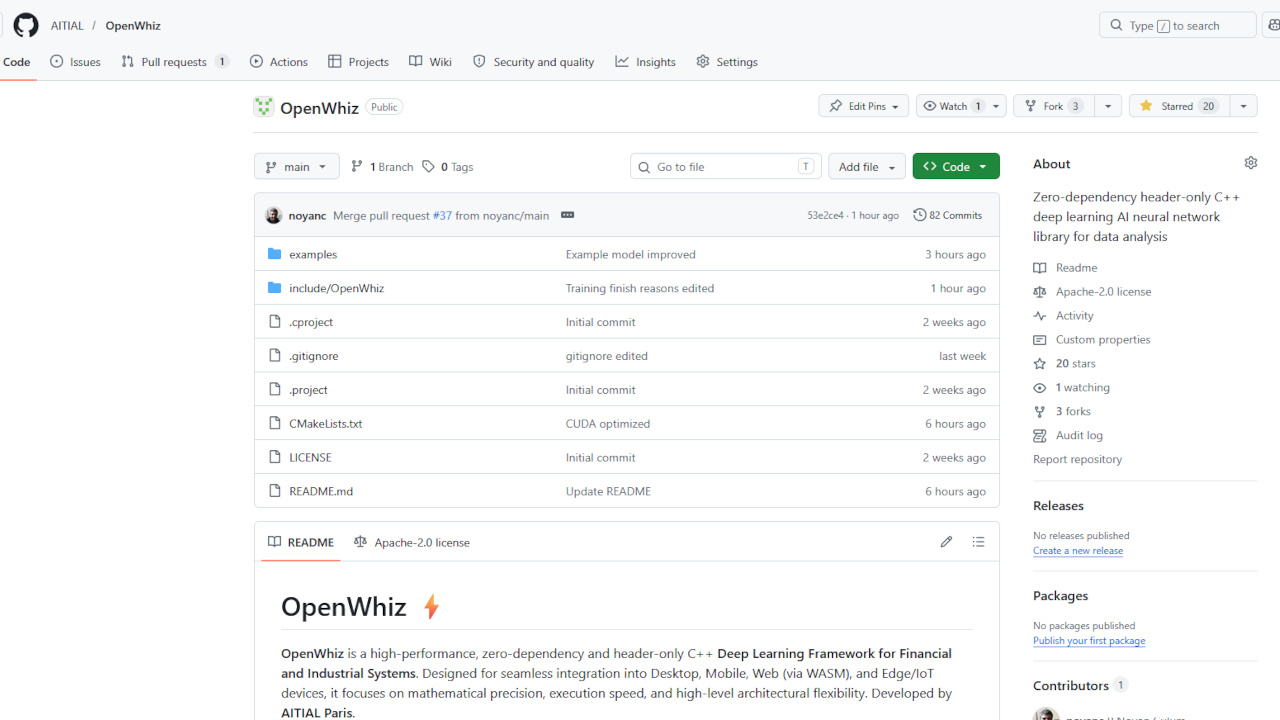

Join the Community on GitHub

The release of OpenWhiz marks a new chapter for Aitial and the open-source community. We believe that by providing the source code, we enable developers to build more efficient, sustainable, and faster AI applications.

We invite you to explore the source code, contribute to the core engine, and integrate OpenWhiz into your production pipelines. The repository includes comprehensive documentation, implementation examples, and our rigorous benchmarking suite.

Visit the Official Repository: https://github.com/AITIAL/OpenWhiz

For detailed technical specifications and updates on our latest programs, please visit the official Aitial newsroom.